What Don't We Know?

The 10 urgent questions to answer about how AI will reshape relationships

In just three years, AI has gone from novelty to pervasive reality, woven into how young people do homework, rehearse hard conversations, process breakups, and sometimes talk to something they’ve come to think of as a friend. It’s rapidly becoming the quiet partner of a generation.

A month ago, we published a report on how 2,400 young people are actually using AI and the impact it is having on their relational worlds. It painted a more grounded and nuanced picture than the public conversation tends to allow — and challenged many of our assumptions.

But it also prompted as many questions as it answered. It pushed us to pause and ask: what don’t we know?

The study offered a powerful snapshot of what AI use looks like today, but it left us wondering: what will be the lasting impact of all the ways AI is quietly working its way into daily life?

So we turned to 37 of the brightest minds working at the intersection of AI, youth development, and human connection — researchers, clinicians, educators, technologists, and youth leaders — and asked:

What’s keeping you up at night? What do we need to understand if we want to shape the best possible version of AI’s role in young people’s relational worlds?

In a moment when it’s easy to get lost in how fast things are changing, these experts offer an incisive map of what we still need to understand to make informed choices about the world we want.

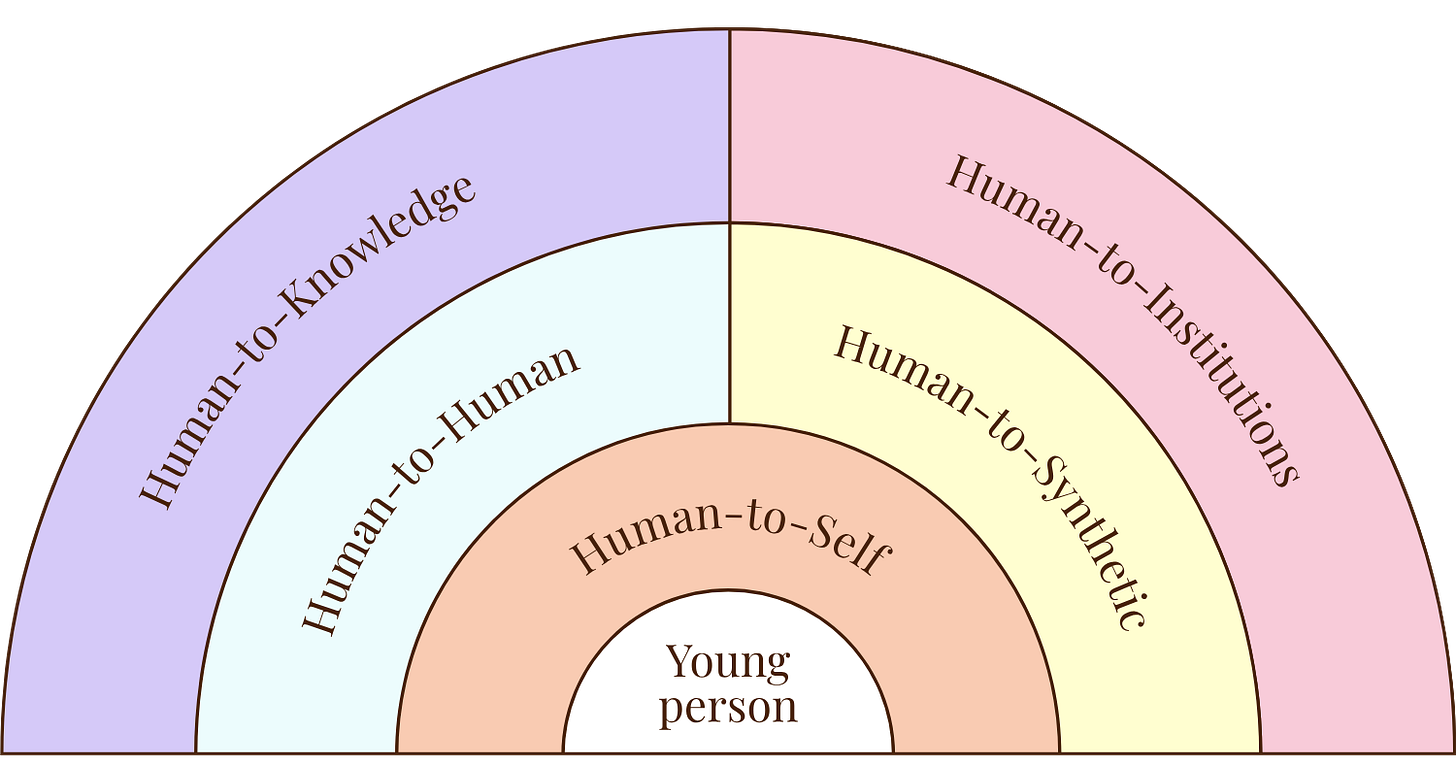

Reshaping Five Domains of Relationships

As we dug in with this group of experts, we quickly realized we had to widen the aperture on how AI will interact with our relational worlds. The patterns they were tracking and the questions they kept returning to pointed to five dimensions of our relational lives: human-to-self, human-to-human, human-to-synthetic, human-to-knowledge, and human-to-institutions.

In each of these dimensions, AI is poised to reshape the fundamental nature of the relationship, in ways we’re only beginning to understand. The right questions are where understanding starts.

1. Human-to-Self: Identity, Agency, and Inner Development

Your teens and twenties are a critical developmental window for forming a sense of who you are. For today’s young people, that journey is now unfolding alongside a powerful, often helpful, but rarely light-touch companion. What happens to the process of identity development when it’s braided with a technology designed not only to help you act in the world, but to help you understand and author who you are within it?

What we need to understand:

How does engaging with AI change one’s sense of identity, agency, and self-trust?

What are the developmental effects of AI use on “self-knowing” skills like self-reflection, resilience to ambiguity, and independent reasoning, and how soon can those shifts be detected?

2. Human-to-Human: Connection, Substitution, and Relational Muscle

The skills that matter most for healthy relationships — asking for help, navigating conflict, sitting with discomfort, being vulnerable — develop only through practice with other people. AI now offers young people a way to rehearse those moments, or skip them entirely. What happens to the muscle of human connection when it can be outsourced to a tool that always responds, never judges, and asks nothing in return?

What we need to understand:

Under what conditions does AI use strengthen, mediate, or displace human-to-human connection?

In what ways does AI use impact the development of core relational skills (such as emotional regulation, perspective-taking, and help-seeking) in young people?

What unmet relational needs lead young people to turn to AI, and what does that reveal about the environments around them?

3. Human-to-Synthetic: Companions, Characters, and Embodied AI

AI companions are no longer hypothetical. Young people are already turning to AI systems during moments of loneliness, boredom, or distress, and these systems are designed to respond, remember, and simulate emotional presence in ways that feel genuinely relational. As they become more personalized, embodied, and commercialized through wearables and robotics, the lines blur further. What does it mean developmentally when one of a young person’s most trusted confidants is a product?

What we need to understand:

Does AI-mediated emotional support produce outcomes and attachment dynamics comparable to human co-regulation and relationships?

What are the developmental and relational implications when young people form emotional attachments to AI systems, particularly as those systems become more personalized, embodied, and commercialized?

4. Human-to-Knowledge: Access, Literacy, and Learning Inequality

AI is entering the learning ecosystem as a new actor that can explain anything, summarize everything, and do the hard intellectual work in seconds. For some, it expands access to learning support that was once available only to the privileged few. For others, it bypasses the very struggle that learning requires.

The relational implications may be profound. Learning has always been a social practice — meaning-making among peers, mentors, and educators. Decades of research show how much belonging, collaboration, and even disagreement shape how young people think and grow. We’re on new terrain when it comes to understanding how a technology this capable and this personified will reshape those relationships.

What we need to understand:

How does AI influence learning dispositions like intellectual curiosity, persistence, and motivation, and how might those dispositions reshape AI use in return?

How does access to AI shape the distribution of opportunity among young people, and through what mechanisms does it reinforce or disrupt existing inequities?

5. Human-to-Institutions: Trust, Authority, and Shared Reality

Young people learn what’s true and who to trust through relationships — with parents, teachers, schools, media, and the broader institutions that shape public life. AI is now entering that ecosystem as a new kind of authority. It answers faster than a parent, sounds more confident than a teacher, and filters the information that used to come from journalists. It’s becoming an intermediary layer between young people and the world — one that increasingly presents itself as the source of truth, and produces content that’s harder and harder to distinguish from human-made information.

As young people grow up with AI as a trusted source, what happens to the institutional relationships that have always taught us how to evaluate truth, recognize expertise, and share a common reality?

What we need to understand:

How does AI use shape how young people develop trust in people, institutions, and information, and how do they learn to navigate conflicting sources of authority?

Questions worth carrying

These are big questions. But they are not unknowable questions.

The current trajectory is to let technology proliferate first, and try to understand its impact on young people decades later. That’s the pattern we followed with social media, and it’s the pattern we’re at risk of repeating now, faster and on a larger scale.

There’s a term for this dynamic: the wisdom gap.

It names the widening distance between the speed at which transformative technologies enter our lives and the much slower pace at which we develop the understanding, ethics, and infrastructure to use them well. Every generation has lived with some version of this gap. But for today’s young people, it’s the relational fabric of their development that’s being shaped inside it.

The good news is that we can choose to narrow it.

We can do it as a field — by investing in the research infrastructure needed to actually answer these questions, breaking down silos between disciplines that each hold different pieces of the puzzle, and learning alongside the young people who are living this in real time, not as subjects but as collaborators.

And we can do it in our daily lives. These questions aren’t only for researchers — they’re for parents, teachers, mentors, and friends. They’re worth noticing in ourselves and exploring openly with the young people we love, teach, and learn from.

The poet Rainer Maria Rilke wrote, in Letters to a Young Poet: “Be patient toward all that is unsolved in your heart and try to love the questions themselves… Live the questions now. Perhaps you will then gradually, without noticing it, live along some distant day into the answers.”

The questions in this report deserve to be lived with. To be carried, debated, and worked on — together, openly, by the people whose lives they will most shape.

We hope you’ll carry them with us.

Want to go deeper?

Everything in this post draws from our full report, The Questions We Should Be Asking Now: on AI, Youth, and the Future of Relationships, which includes detailed findings from all 37 conversations, expert quotes, the complete research questions, and our analysis of what the field needs to move forward. Read the full landscape scan here.

This landscape scan was authored by Changemaker Network member Amy Blankson — Co-Founder of the Digital Wellness Institute, speaker, and best-selling author. She also writes on Substack in her publication Humanity, in the Loop.

The Rithm Project would like to thank the following individuals for their generous contribution of thought leadership:

Angela Duckworth, PhD, Professor, University of Pennsylvania

Erika Anderson, Founder & CEO, Building Humane Technology

Evan Michael Bennett, PhD Student, University of Pennsylvania

Pamela Cantor, MD, Co-Founder and CEO, The Human Potential L.A.B.

Susan Cain, NYT bestselling author of QUIET and BITTERSWEET

Sandra Bond Chapman, PhD, Founder and Chief Director, Center for BrainHealth, Distinguished Professor, University of Texas at Dallas

Dylan Thomas Doyle, PhD, Executive Director, All Tomorrows Institute

Shereen El Mallah, PhD, Faculty Affiliate, Youth-Nex and the Center for Advanced Study of Teaching and Learning (CASTL), University of Virginia

TeRay Esquibel, Executive Director, Purpose Commons

Julia Freeland Fisher, PhD, Director of Education, Clayton Christensen Institute

Sara Filipčić, Founder and Director, Institute for Humane Robotics

Jacqueline Gamino, PhD, Director, Adolescent Reasoning Initiative at the Center for BrainHealth, Assistant Research Professor, University of Texas at Dallas

Hannah Groos, Student, Duke University

Thao Ha, PhD, Associate Professor of Psychology, Healthy Experiences Across Relationships and Transitions (@HEART) Lab, Arizona State University

John C. Havens, Global Director, IEEE Planet Positive 2030

Isabelle Hau, Executive Director, Stanford Accelerator for Learning

Ben Houltberg, PhD, President and CEO, Search Institute

David Jay, Executive Director, Relationality Lab

Marisol Jimenez, MS Candidate, Stevens Institute of Technology

Ann Kim, Co-Founder, The Together Project

Alex Kotran, Founder & CEO, aiEDU

Tamara Lechner, Chair, AI for Human Flourishing (Harvard Human Flourishing Network)

Damion LeeNatali, President, TRAILS

Anne Maheux, PhD, Assistant Professor, University of North Carolina

Caitlin Morris, PhD Candidate, MIT Media Lab

Jacqueline Nesi, PhD, Founder, Techno Sapiens, Assistant Professor, Brown University

Desmond Patton, MSW, PhD, Founding Director, SAFELab, University of Pennsylvania, Professor, University of Pennsylvania

Michael Preston, PhD, Executive Director, Joan Ganz Cooney Center at Sesame Workshop

Yvette Renteria, Chief Program Officer, Common Sense Media

Michael Rich, MD, Founder and Director, Digital Wellness Lab, Boston Children’s Hospital

Brooke Stafford-Brizard, PhD, Senior Vice President, Carnegie Foundation for the Advancement of Teaching

Sean Talamas, PhD, Founder and Managing Partner, Leadership Cooperative

Beck Tench, PhD, Principal Investigator, Project Zero, Designer and Researcher, Center for Digital Thriving, Harvard Graduate School of Education

Haley Valdez, University of Southern California Graduate

Chris Wegemer, PhD, AI Policy Fellow, Mila, Quebec Artificial Intelligence Institute

Leo Wu, Co-Founder and President, AI Consensus

Peggy Yin, PhD student, Stanford University

The views expressed are those of the individual and do not necessarily reflect those of their employer or affiliated institution.

The same way a car simulator cannot teach actual driving, AI cannot teach actual relationships.

But it may serve as a playground. A place to brainstorm, practice the language, process what happened. I tell my patients to treat emotional AI chats as a sounding journal — think it through, find the words, then go live it.

I work in an inpatient unit. Most of our patients genuinely feel better just offloading some feelings onto a stranger and having quiet, screen-free time to process their lives.

AI can guide that kind of reflection. A sophisticated, infinitely patient sounding board.

But it cannot replace another person. Because AI has no personality. No stakes in the conversation.