How to Clone Yourself

When AI agents do the work and the thinking, what do we choose to keep for ourselves?

On February 4th, 2025 I cloned myself for the first time.

This wasn’t the fully realized science fiction version of cloning — an equally handsome, slightly off-brand version of Nate wasn’t still sitting at my computer while I put in my headphones, left my office, and embarked on a run. Yet in my mind something similar was happening: I was happily running through my neighborhood park, listening to my audiobook, while simultaneously conducting in-depth research on a prospective funder for my non-profit.

Two days earlier, OpenAI had released a new ChatGPT tool called “Deep Research,” describing the feature as “OpenAI’s next agent that can do work for you independently—you give it a prompt, and ChatGPT will find, analyze, and synthesize hundreds of online sources to create a comprehensive report at the level of a research analyst.”

I didn’t fully understand what an AI agent was when I typed in “give me a detailed synthesis of the funding priorities, values, and behaviors of [a prospective funder],” hit enter, and walked away. Yet for the first time in my life, AI was doing lengthy, complex work without needing my input. The work was so lengthy and so complex that it didn’t make sense to stick around and watch it, so I checked the weather and grabbed my headphones.

The Tab That Works While You Don’t

A little over a year later, I understand that I was experiencing what’s now become a defining shift in how AI works, one that’s changed not just what I can produce but how I think about what’s worth doing myself.

If you’ve used ChatGPT, Gemini, or Claude, you’re probably used to a back-and-forth exchange in which you type something, the AI responds, and you type again. AI agents work differently. When provided with a goal, agents figure out how to accomplish it, then work away, sometimes for hours, without you in the loop.

My simple “research this organization” prompt was nothing compared to what today’s AI agents can handle. That same task, which took Deep Research about twenty minutes in early 2025, could now be handed to an agent as part of something far more ambitious, such as “Research the top 50 family foundations funding youth mental health initiatives, compare their giving priorities and application processes, identify the 10 best fits for our organization, draft a preliminary outreach strategy for each, and build these into an interactive dashboard.”

The gap between my 2025 research task and what’s possible today isn’t just anecdotal.

Researchers at METR have been tracking what they call the “time horizon” of AI agents: the length of a task an agent can reliably complete without human help. Over the past six years, that horizon has been doubling every seven months. Recently, the pace has accelerated to doubling every four months. If the trend holds, agents capable of independently handling week-long projects are not years away; they’re months away.
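To make the doubling arithmetic concrete, here is a minimal Python sketch. The function names and the starting figures (an 8-hour horizon today, a 40-hour work week as the target) are assumptions chosen for illustration, not METR's numbers; only the seven-month and four-month doubling times come from the paragraph above.

```python
import math


def growth_factor(elapsed_months: float, doubling_months: float) -> float:
    """How many times larger the horizon gets after elapsed_months."""
    return 2 ** (elapsed_months / doubling_months)


def months_to_reach(current_hours: float, target_hours: float,
                    doubling_months: float) -> float:
    """Months until a steadily doubling horizon grows from current to target."""
    return doubling_months * math.log2(target_hours / current_hours)


# Six years (72 months) of doubling every seven months.
six_year_growth = growth_factor(72, 7)

# HYPOTHETICAL inputs: an 8-hour horizon today, a 40-hour work week as the goal.
months_at_fast_pace = months_to_reach(8, 40, 4)  # doubling every four months
months_at_slow_pace = months_to_reach(8, 40, 7)  # doubling every seven months

print(f"Growth over six years: about {six_year_growth:,.0f}x")
print(f"Months to a work week at the four-month pace: {months_at_fast_pace:.1f}")
print(f"Months to a work week at the seven-month pace: {months_at_slow_pace:.1f}")
```

Under those assumed inputs, six years of seven-month doublings works out to roughly a 1,250-fold increase, and the gap between the two paces is the difference between reaching a work-week horizon in under a year and reaching it in about sixteen months.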

The Moving Target

Along with growing capabilities and time horizons, I suspect the social and psychological impact of this technology is changing faster than we can keep up with. Over the last year, as I’ve used agentic AI for more and more tasks, my expectations of its (and by extension my own) outputs have fundamentally changed how I think about productivity.

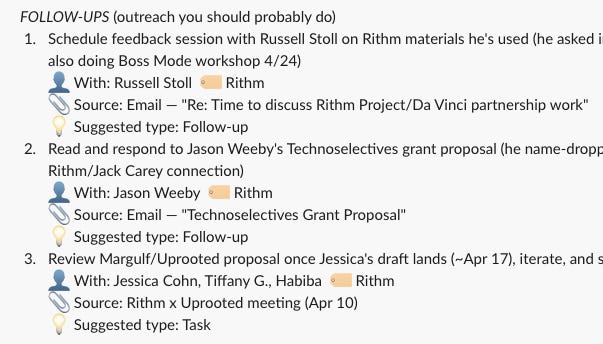

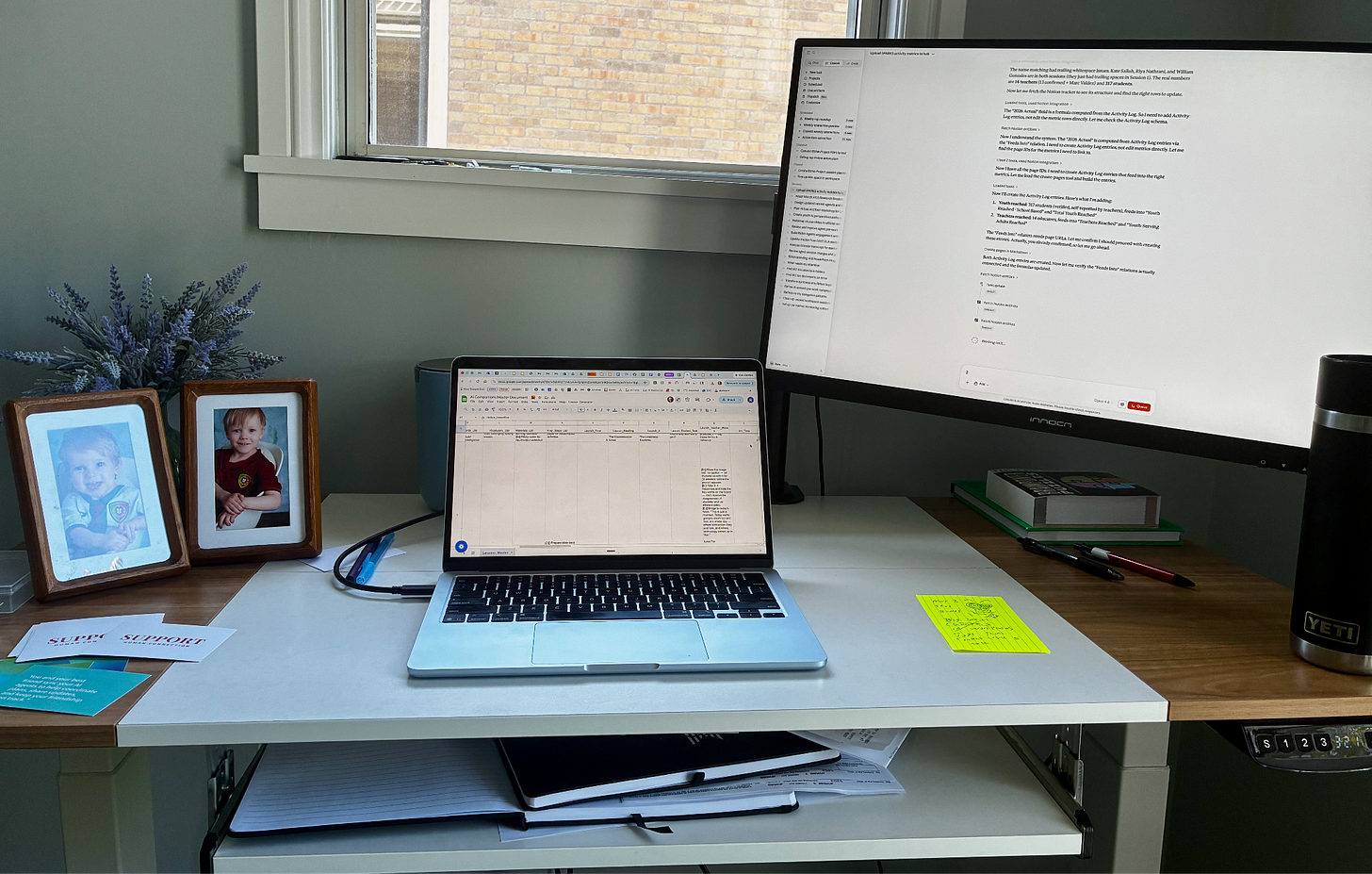

AI works on a task in one tab or app while I simultaneously work on another. A growing array of automated tasks now run while I sleep or put my kids to bed, including a comprehensive summary report of to-dos pulled from my emails, meeting notes, and Slack messages, and a Sunday evening “rap report” that researches then emails me and a close friend the latest rap music to check out.

I remain elated by this technology, and I still feel like I’ve cloned myself, but the more I expect AI to think and work for me, the more that cloning has become a baseline expectation of what I can, and must, produce on a daily basis.

I now catch myself thinking “I don’t have AI working on anything right now” as if this is some new form of productivity failure. If two or more of me aren’t getting work done, am I really maximizing my working hours?

That shift shouldn’t be a surprise. Every tool that was supposed to save us time has raised the bar for how much we’re expected to produce instead. Email created an expectation of constant availability. In retrospect, it feels rather silly that we thought Slack, or any other “streamlined collaboration” product, would reduce rather than increase the barrage of “urgent” work expectations at every waking (or resting) hour of the day.

Economists call this the Jevons Paradox: when we can use a resource more efficiently, each use gets cheaper and the resource becomes more useful to us, so our total consumption ends up rising rather than falling. I write ‘useful’ loosely here. These tools either genuinely help us or just make us feel productive, and in our culture I’m not sure we can tell the difference.

There is an optimistic and intuitive counter to all of this: if agents do our work for us, we’ll finally have time to connect, to live, to be more fulfilled humans.

I’ve heard that vision from AI enthusiasts and hopeful young people alike, and I hope they’re right. But nothing in our track record suggests we’ll choose leisure and connection over work-based outputs unless we choose this moment to truly fight for those shifts. It didn’t take long for me to view agent-use less as freedom from work and more as a work multiplier I couldn’t afford to leave idle.

What Stops Being Yours When You Give It Away?

I recognize that being an eager early adopter of AI agents says things about me that I’m both proud of and concerned about. My instinct to grab the newest tool comes from some mix of genuine curiosity and a deep fear of being left behind. Your appetite and motivation for using these tools may look a bit different than mine. But as agents become easier to use and harder to ignore, more people will attempt their own versions of cloning. I suspect the psychological pattern I’ve experienced won’t be unique to me either.

This matters more, and differently, than our previous changes to daily productivity. AI agents are distinct because of what they offload. Email and Slack changed the logistics of our work: how fast we could send a message, coordinate a meeting, or share a file. Agents take over the work and the thinking itself.

When I ask an agent to analyze a folder full of data files and produce a summary analysis, I’ve handed it both a large amount of tedious work that might not feel important to me (cleaning data, checking formulas) and the very thinking I would have previously described as my value-add (interpreting ambiguous patterns, prioritizing what matters, organizing information for a specific audience).

Much of that work, whether seemingly tedious or engaging, was also social. I’ve sat through many meetings in my career in which groups review data together and share headline takeaways. An agent can likely produce better, more objective analysis faster. And yet the value of those rooms was never purely about the output. People were building shared understanding, reading each other’s reactions, developing trust in each other’s judgment. When I hand that process to an agent, I don’t just get back time; I also lose a reason to be in a room with other people.

The cloning logic in my brain tells me, “Now you can do other meaningful work!” or “Go for a run!” or “You haven’t offloaded your abilities, you’ve multiplied them!”

But what if the agent doing that analysis is better at it than I am, and what if the next generation of agents is better still? Where do I draw the line between what I do and what I hand off?

Productivity logic won’t answer that question for me. I’m going to need a deeper, more grounded understanding of what is mine and therefore not to be handed off.

Building Fires

In his 1984 book “Technology and the Character of Contemporary Life,” the philosopher Albert Borgmann described the way technology delivers the outputs of an activity while quietly stripping away the experiences and benefits of that activity itself. We might celebrate the marvels of modern central heating without completely understanding that the process of building and tending to a fire gave us purpose, connection to those we lived with, and a deeper respect for our environment and surroundings.

I’m not chopping firewood anytime soon, but it feels clear that just because many parts of my day-to-day life could be handed to an agent doesn’t necessarily mean they should be. The questions for all of us become: which parts of your life stop being yours when you hand them off? What work builds something meaningful within you while you do it? And when you delegate that work and gain time, what are you also choosing to lose?

I don’t have clean answers to those questions, but I’m becoming aware of the ways we’re all watching each other in the middle of AI’s transformation of our world, doing our best to keep up, perhaps trying to keep our jobs, or just trying to understand what work and technology use should look like going forward.

There’s a FOMO logic spreading that has no room for this kind of discernment, one where every agent automation you’ve built or random site you’ve vibe-coded is a badge of productivity greatness.

I hope we can adopt a more grounded view in which those creations are seen as meaningful trade-offs, not to judge their creation but to become wiser about what we choose to do next ourselves.

That feels especially urgent for the young people who will live most of their lives in an agentic world. What do we hope the coming generations will build, automate, and hand off? What do we hope they keep for themselves, and why?

Dig Deeper on AI Agents and Their Impact

We built this exploration site to help our network explore AI agents. It’s built for anyone, regardless of your background or whether you’re using agents (or AI at all). Pick a profile (abstainer, conversationalist, experimenter, integrator) and complete an aligned activity building with agents or reflecting on their impact on your life.